Why AI is Dumb (August 25 Edition)

When I say this, I am referring to the most recent explosion of interest in the Generative AI space. That's your LLMs and GPTs. AI has existed in some form for at least the last 75 years. It covers everything from computer vision to natural language processing to machine learning and a lot of other major and minor subfields.

I am not a Generative AI/LLM researcher by any means. So take what I have to say with a grain of salt. What I am presenting today is a collection of observations, frustrations, and thoughts.

I fully acknowledge that what we have today represents a lot of progress. I can also see that a good percentage of people's enthusiasm for AI is driven by hype. In my own life, I am trying to exploit its promise as much as possible so I can improve the quality of the work I produce and the decisions I make, give myself more free time, and so on. It is almost unthinkable for me today that I won't use AI for my work. Not that I could not do without it. I did fine without AI for over 20 years. And it's not perfect in any shape or form. I throw something at the tools and agents to see if they can produce something that resembles intelligence. More often than not, the result is wrong or lacking. Then I have to decide if I want to fight with the model or just do it manually myself. Despite its shortcomings, it has become part of my habits and way of working in a very short amount of time.

I feel that in the middle of all the hype, people aren't talking about these four things enough:

It is squashing critical thinking

As I write this post, I am being plagued by autocomplete. I am using a speech-to-text tool to write these paragraphs. As I do so, it is fixing grammar mistakes caused by pauses in my speech, which come from my slow thinking and which the voice engine struggles to interpret.

It is also trying to suggest that I write something very, very similar to a lot of opinions I have read recently. Maybe they have all been written by GPTs. Maybe my GPTs have been trained on that data. Maybe that is all there is to say about this topic. Maybe it's been trained to push an agenda.

However, if I had not storyboarded this completely offline first, using pen and paper, I could easily see how it could alter my thinking by suggesting things that everyone else is talking about and that seem to make sense on the surface.

If you are familiar with the concept of "priming," you can see how significant its influence on my thinking will be as it suggests various words and expressions over time. I will think more like the average AI user day by day just by continuing to use it.

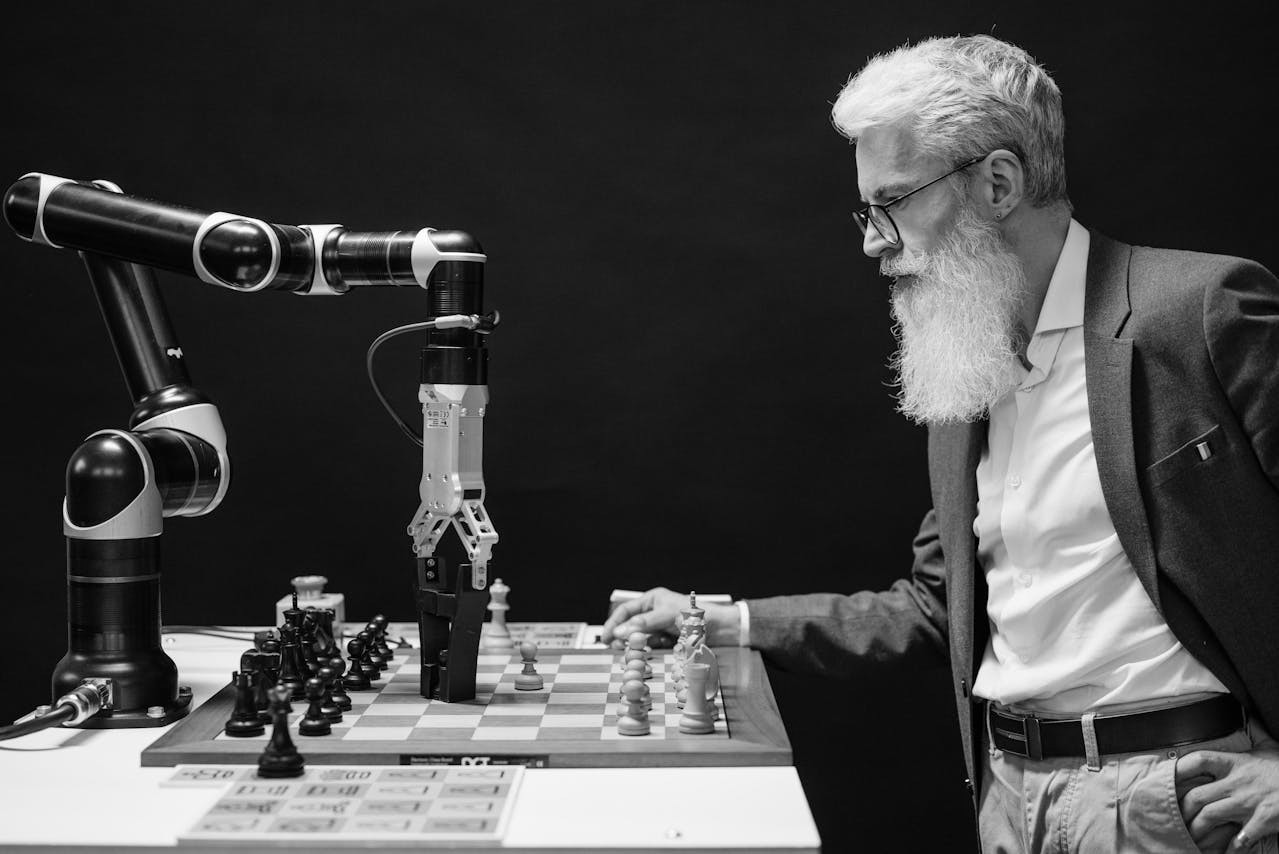

It is not intelligent

The first thought that comes to my mind when I think about the GPT paper is that the model it describes can't be intelligent. You can download the paper freely from the OpenAI website. As I understand it, it trains its "LLM" by building a neural network that captures the probability of seeing a word given the previous sequence of words. It then trains its GPT on this network's weights and on training questions and answers. Once again, I am not an expert. But thinking logically about it, you would be training an algorithm to navigate the LLM to produce something that matches the training data. Only that would explain how it hallucinates and makes stuff up. If that is indeed what it's trying to do, does it really understand anything? Is this what intelligence is?
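To make "the probability of seeing a word given a previous sequence of words" concrete, here is a minimal sketch in Python. It is a toy bigram counter that only looks at the single previous word, not a neural network and not how GPT models are actually built. The corpus and the function names `train_bigram` and `next_word_probs` are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each word follows each other word in the text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows

def next_word_probs(follows, prev):
    """Turn raw counts into P(next word | previous word)."""
    counts = follows.get(prev.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the word never appeared in training, so nothing to predict
    return {word: count / total for word, count in counts.items()}

# Tiny made-up training corpus (an assumption for this sketch).
corpus = "the apple is red . the apple is sweet . the sky is blue ."
model = train_bigram(corpus)

print(next_word_probs(model, "the"))     # "apple" is more likely than "sky"
print(next_word_probs(model, "banana"))  # empty: never seen in training
```

Even in this toy, the two failure modes show up: for a word it has never seen, it has nothing to say, and for a word it has seen, it can only replay the statistics of what was written down.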

The first problem I can think of is that it will either be severely limited by its training data or be hallucinating a lot. Given how these AI tools perform these days, I would say it does both. For example, think of an apple. I have a concept of an apple in my head. I understand it intellectually: it's a fruit, has sugar, vitamin C, etc. I understand it biologically and through experience: how I should eat an apple, how it tastes, how some kid threw an apple at another kid during a lunchtime brawl in my primary school. There are a thousand different things and experiences that have shaped my understanding of what an apple is, from a delicious snack to a weapon. Do people write about this stuff for it to end up on ChatGPT's doorstep as training data? Most probably not. People don't write about their implicit knowledge and experiences. It is automatic, and bringing unconscious things to the surface is difficult. So the model would either not be able to produce those derivations or would learn to derive them from some other context and apply them, potentially falsely.

The second problem is probably language. When I want to communicate that concept in English, I say "Apple". When I want to communicate that concept in Japanese, I say "りんご" (ringo). However, does this learning approach essentially mean that the model will have many different concepts of an apple, maybe one for each language? Or will it have a single concept of an apple, with training that involves soullessly translating it all into English, for example? If you know more than one language, you can relate to how you lose subtlety and contextual meaning when you translate concepts from one language to another.

The third problem is that so much of our intelligence is not captured in language. For example, if I am going to propose something in a meeting, I will be reading the room, looking at people's body language, their facial expressions, and their tone of voice, judging their receptiveness, using my understanding of what they know and care about, and so on. I will adjust my proposal, or may not even propose at all, based on that feedback. It's not something we write down. It would be very hard to describe. A language model cannot capture that capability, that complexity. My one-and-a-half-year-old toddler, who was not speaking yet, once dropped a milk tin on my wife's face to communicate that he was hungry. My wife immediately understood that he was hungry. How do you train a language model to understand that?

To give another example, who has tried to cook with LLM voice mode? One of my hobbies that has not taken off yet is coming up with recipes with ChatGPT based on what I have in my pantry and dynamically adjusting the recipes while I am cooking. It sort of works, but it's not there yet. After a time or two, when the novelty wears off, you will become super annoyed with how repetitive and dumb it is. For example, it has no concept or understanding of time. It will jump five steps ahead in 10 seconds, skipping over work that takes half an hour. It will keep asking the same question over and over again even though you have answered it.

Long story short, it is just trying to predict the next word in a sequence. With enough training data, it will sound incredibly smart. But is that intelligence? Or just a very sophisticated parrot?

It is not exploiting the full potential of computing

For all the progress, I sometimes feel that it has taken a step back that no one is talking about.

For example, a little while ago I tried to use prompting to prepare my tax return. The numbers were not making sense compared to what I had manually calculated. After digging deeper, I found that it had added a few numbers wrong. The results were presented confidently as accurate, but they were incorrect.

My immediate reaction was: why is it not using a maths engine to do the calculations? That is one area where computers are far, far superior to humans. Think of your planning algorithms, for example. With the compute resources used to run these models, a computer can evaluate, in seconds, trillions of combinations of solutions that would take a human millions of lifetimes to attempt. Why is it not using its full power yet?
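As a rough illustration of what handing the arithmetic to a "maths engine" could look like, here is a minimal Python sketch. The idea is that the model only decides which numbers to add, and ordinary deterministic code does the adding. The function name `add_amounts` and the figures are hypothetical, and this is not a claim about how any particular AI product works internally.

```python
from decimal import Decimal

def add_amounts(amounts):
    """Sum currency amounts exactly.

    Amounts are passed as strings so that Decimal can represent them
    without the rounding error of binary floating point.
    """
    return sum((Decimal(a) for a in amounts), Decimal("0"))

# Hypothetical figures for illustration, not from a real tax return.
figures = ["1234.56", "789.10", "45.99"]
total = add_amounts(figures)
print(total)  # 2069.65
```

The point is not the three lines of addition. It is that the result is the same every time and can be checked, which is exactly what was missing from the confidently wrong totals above.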

You can't reach AGI by indexing the internet

Here, I am going to assume that with AGI we are trying to achieve a really smart, human-level intelligence. So much of our intelligence is hardwired to our survival and our instincts as primitive animals that language will probably never be enough to capture it.

The premise of knowing everything is also wrong. There is no human being that knows everything. It's not physically or mentally possible. A lot of our intelligence has to do with our experience of not knowing a whole lot of things.

It's not even conceptually possible. The internet is full of conflicting information. Screw the internet, even peer-reviewed scientific journals are full of conflicting information. Ask a researcher in the field and they will tell you how to navigate that space. Ask a different researcher in the field, and they might have a subtly different algorithm or a different set of opinions. What heuristic are you going to give your model - that the more often something is repeated, or the more recent it is, the truer it is? If it's going to be trained on people's opinions, biases and propaganda, it will be as misinformed as the data it is trained on.
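To show why "the more often something is repeated, the truer it is" is a shaky heuristic, here is a tiny Python sketch. The claims are made-up labels and the function name `most_repeated` is hypothetical. It only illustrates the repetition heuristic itself, not how any real model weighs its sources.

```python
from collections import Counter

def most_repeated(claims):
    """Pick whichever claim appears most often, with no notion of truth."""
    return Counter(claims).most_common(1)[0][0]

# Made-up data: "claim_a" comes from two careful sources,
# "claim_b" is a mistake that three other sources copied from each other.
claims = ["claim_a", "claim_b", "claim_b", "claim_a", "claim_b"]

print(most_repeated(claims))  # claim_b
```

The heuristic happily picks the copied mistake, because counting repetitions measures popularity, not correctness.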

Final Notes

I say all this but I will also acknowledge that these tools are still incredibly useful.

No one, including software engineers, is doing super intelligent, non-derivative work 100% of the time. Researchers spend months and years conducting a single study that expands their field's corpus by less than 0.1%. Engineers spend months and years tinkering and iterating on their logic and designs to produce something novel. More than 99% of the time is spent doing things someone else has already done somewhere else, or on activities which in themselves do not require high levels of critical thinking and intelligence.

I am all for getting as much help as possible for these tasks and for doing novel work faster.